The long-held fantasy of AI that writes its own upgrades has officially left the sci-fi shelf and landed squarely in a GitHub repository near you. While the idea of self-evolving agents has been percolating for a while, a new crop of open-source projects is turning the concept into a practical, if slightly unnerving, reality. Leading the charge are MetaClaw, a framework for agents that create new skills from failures, and AutoResearch, a minimalist tool from AI luminary Andrej Karpathy that puts LLM development on autopilot.

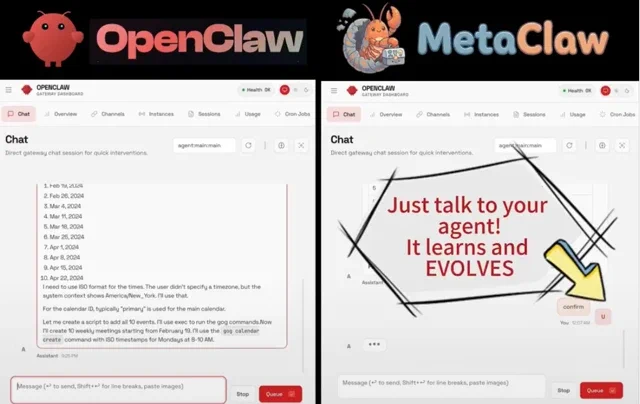

MetaClaw, developed by the AIMING Lab at UNC-Chapel Hill, is designed to learn directly from live conversations with users. Instead of waiting for massive offline retraining, MetaClaw analyzes failed interactions and uses an LLM to automatically generate new “skills” to prevent the same mistake from happening twice. The system essentially allows an agent to evolve over time by learning from its own blunders, a feature many humans are still waiting for in a software update. The entire project is detailed in the MetaClaw GitHub repository.
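To make the failure-to-skill idea concrete, here is a minimal Python sketch. It is not MetaClaw's actual code or API: `Skill`, `SkillLibrary`, and `fake_llm_skill_writer` are hypothetical names invented for illustration, and the "LLM" is a plain function standing in for a real model call. It only shows the general shape of the loop described above: record a failed exchange, generate a skill from it, and surface that skill when a similar request comes in.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Skill:
    trigger: str      # keyword that makes the skill relevant (hypothetical design)
    instruction: str  # guidance that would be added to the agent's prompt


@dataclass
class SkillLibrary:
    # Stand-in for an LLM call that turns a failed exchange into a Skill.
    generate_skill: Callable[[str, str], Skill]
    skills: list[Skill] = field(default_factory=list)

    def record_failure(self, user_message: str, bad_reply: str) -> Skill:
        """Create a new skill from a failed interaction and store it."""
        skill = self.generate_skill(user_message, bad_reply)
        self.skills.append(skill)
        return skill

    def relevant_skills(self, user_message: str) -> list[Skill]:
        """Return stored skills whose trigger appears in the new message."""
        text = user_message.lower()
        return [s for s in self.skills if s.trigger in text]


def fake_llm_skill_writer(user_message: str, bad_reply: str) -> Skill:
    """Assumption: a toy replacement for the LLM that writes the skill."""
    trigger = user_message.lower().split()[0]
    instruction = f"Previously failed on {user_message!r}; avoid replying {bad_reply!r}."
    return Skill(trigger=trigger, instruction=instruction)
```

In this toy version, calling `record_failure("Convert 5 miles to km", "5 km")` stores a skill keyed on the word "convert", and a later message containing that word would pull the stored instruction back into the agent's context. A real system would need far better matching than a single keyword.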

Adding fuel to the fire is Andrej Karpathy, the former head of AI at Tesla and a founding member of OpenAI. He recently open-sourced AutoResearch, a brilliantly simple framework that lets an AI agent autonomously conduct machine learning experiments. The agent modifies the training code, runs a short five-minute experiment, evaluates the results, and then decides whether to keep or discard the change before starting the next cycle. As Karpathy drolly noted, the era of “meat computers” doing AI research may be fading. The project is available in the AutoResearch GitHub repository.
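The modify, run, evaluate, keep-or-discard cycle is easy to picture in code. The sketch below is a toy illustration in Python, not AutoResearch's implementation: `Config`, `propose_change`, `run_experiment`, and `research_loop` are hypothetical names, the "code edit" is just a tweak to one learning-rate value, and the "five-minute experiment" is replaced by an instant made-up loss function so the example runs anywhere.

```python
import random
from dataclasses import dataclass


@dataclass
class Config:
    learning_rate: float


def propose_change(config: Config, rng: random.Random) -> Config:
    """Stand-in for the agent editing the training code: halve or double one setting."""
    return Config(learning_rate=config.learning_rate * rng.choice([0.5, 2.0]))


def run_experiment(config: Config) -> float:
    """Stand-in for a short, time-boxed training run. Returns a loss (lower is better).

    Assumption: a toy objective whose best learning rate is 0.01.
    """
    return (config.learning_rate - 0.01) ** 2


def research_loop(initial: Config, cycles: int, seed: int = 0) -> tuple[Config, float]:
    """Repeatedly propose a change, test it, and keep it only if the loss improves."""
    rng = random.Random(seed)
    best, best_loss = initial, run_experiment(initial)
    for _ in range(cycles):
        candidate = propose_change(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:
            best, best_loss = candidate, loss  # keep the change
        # otherwise the change is discarded and the next cycle starts from `best`
    return best, best_loss
```

Calling `research_loop(Config(learning_rate=0.08), cycles=20)` returns the best configuration found and its loss. Because a change is only kept when it beats the current best, the result can never be worse than the starting point, which is the property that makes leaving such a loop unattended plausible.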

The idea isn’t entirely new, with some developers like Máté Benyovszky noting their work on “second generation” self-evolving agents as early as February 2026. However, the arrival of robust, open-source frameworks signals a major inflection point.

Why is this important?

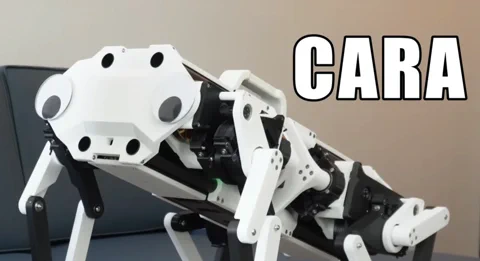

Static AI models that are obsolete the moment they’re deployed are a massive bottleneck. Self-evolving agents represent a fundamental shift from deploying a finished product to releasing a system that can continuously adapt and improve in the real world. For robotics, the implications are staggering. Instead of painstakingly programming every possible action and exception, a robot could learn new physical skills by itself after failing a task. This is the difference between an appliance and a truly autonomous system, and it looks like the tools to build that future are finally arriving.